As a tech company, HawkSoft is keeping a close eye on recent AI developments in language processing tools like ChatGPT. Like many businesses, we’re experimenting with ways these tools can help us be more efficient and productive in our roles. As a member of the marketing team, I’ve been looking at how they can be used in the content generation process for emails, presentations, and long-form content.

We thought it might be helpful to share our early observations with you. As business owners, you may be wondering how you can use these tools in your own agency. Every organization will need to decide whether and how AI fits into its needs and workflows. HawkSoft is still defining our AI adoption strategy, but discovery and experimentation are the first steps of the process! Here are my observations on the different platforms I tried, and what I learned from creating a piece of content from beginning to end using AI.

AI content tool comparison

There are tons of AI tools out there. To start, I tried only three basic, free chat tools: ChatGPT by OpenAI, Bard AI by Google, and Bing Chat by Microsoft. Many other tools (both paid and free) add an extra layer of technology on top of these solutions to do even more.

Different tools will produce different results, and even these three tools continue to change every day, so keep in mind that these initial observations are point-in-time and not set in stone. Here are my thoughts on each of the tools, and how they performed in several overall categories.

ChatGPT

As a disclaimer, my team does have a paid subscription to ChatGPT, which gives us constant access to the tool (otherwise you may have to wait if too many others are using it) and provides access to GPT-4, the most recent model behind ChatGPT (referred to below as ChatGPT-4). However, you can still use the older GPT-3.5 model for free.

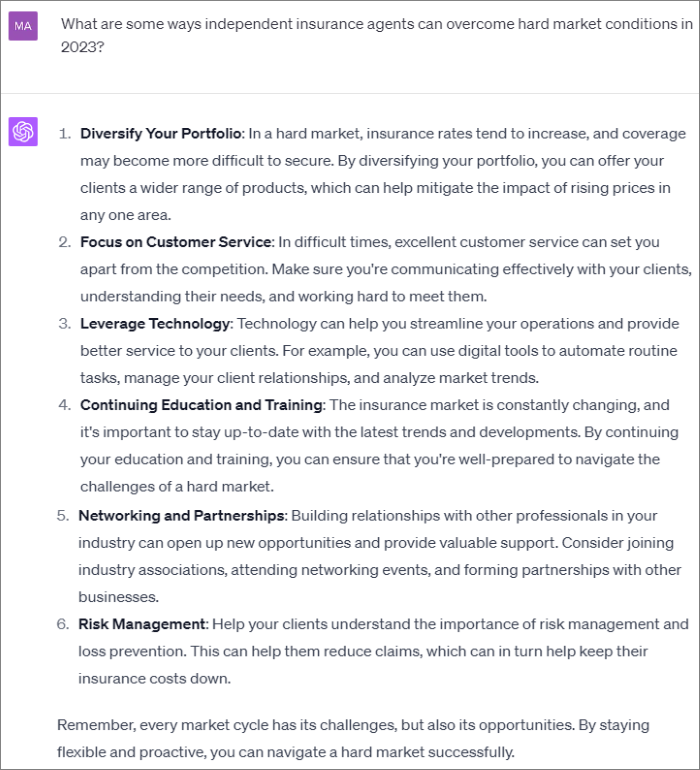

I found ChatGPT to be the most robust of the tools I tried, which is unsurprising considering it’s the one that started the craze. I thought it provided the most sophisticated written responses, and was impressed with how easy it was to save and review past prompt threads. However, I felt it did a poor job of citing sources compared to the other platforms.

- Content Quality: 4/5

I found this to be the best platform for generating actual written content, rather than just suggestions, overviews, or links to articles. While it tended to provide pretty generic content, I could keep asking deeper questions to get to the level of specificity I was looking for.

- Creativity: 3/5

This platform was less conversational than the others, in that it only returned a response related to my specific request (while the others provided additional tips or recommendations). But when I asked it for some creative email subject lines on a topic, I found it provided more creative suggestions than the other two platforms. Prompts also build on each other, making it easy to continue a brainstorm or train of thought across several prompts without writing out a complete prompt each time.

- Prompt History: 5/5

This platform did the best of the three at archiving the complete history of all my prompts and the responses generated. It organized prompts in separate threads that I could rename and easily return to later. You can even share links to specific chat threads with others.

- Source Citations: 2/5

The major drawback of this platform, in my opinion, is that it rarely provided source citations in its responses. One of the times it did, I found that much of the response was taken directly from that article, which made me wary of how much of its responses are actually original content. When I asked it specifically to provide links to articles on a subject, it would provide a list of links with no context (less helpful than a Google search). It was also unable to provide statistics to support its statements when asked.

Example of a prompt and response from ChatGPT-4

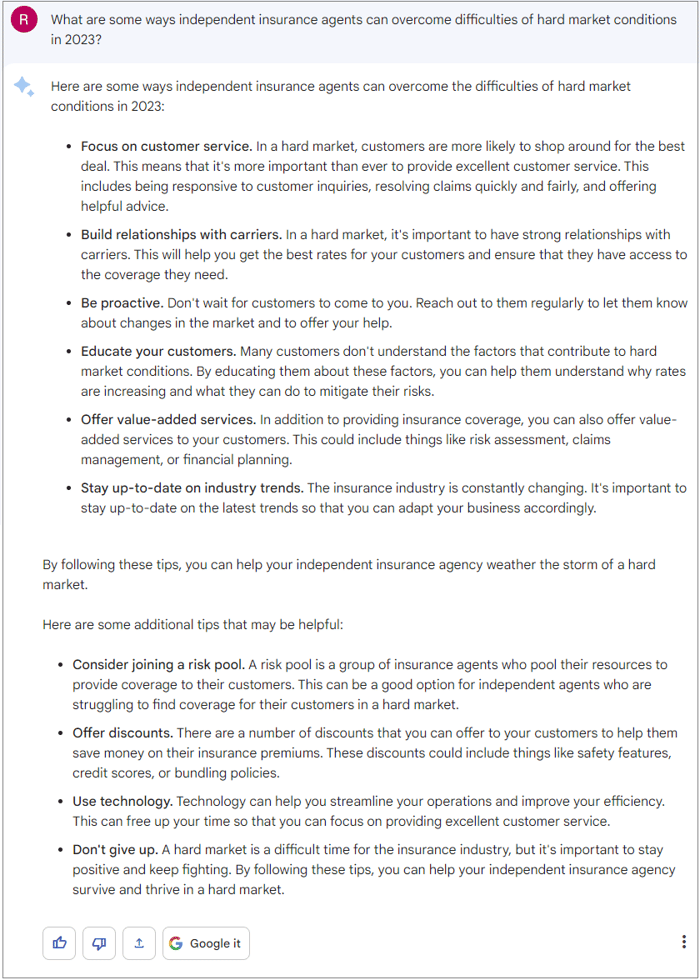

Bard AI (Google)

I found that this platform functioned like a search engine on steroids (which makes sense coming from Google). It’s more conversational than ChatGPT, providing additional tips and recommendations beyond my specific request. While this tool could be good for brainstorming and research, I found it didn’t have all the functionality I was looking for in content creation.

- Content Quality: 3/5

This tool provided responses in the form of tips, recommendations, or bullet summaries more often than paragraph content, so it would likely take more work to turn into final written content. I liked that it lets you toggle through different “draft” versions of its response.

- Creativity: 2/5

For a more conversational tool, I was surprised to find the creativity of the content it generated to be lacking. For example, when asked for creative email subject line ideas on a topic, it gave very formulaic suggestions (e.g. “Grow your agency with our powerful agency management system”). When I asked it to rewrite a summary paragraph in a more casual, creative tone, it only changed a few words of the original response.

- Prompt History: 2/5

Bard allows you to choose whether or not to save your prompt history, and you can easily delete the history for a chosen time range. However, it saves only the prompts you entered, not the responses it provided, which I found unhelpful.

- Source Citations: 3/5

Bard was hit and miss on providing source links for the information it provided: sometimes it provided links, other times it didn’t, and sometimes it recommended links to Google searches on related topics. When asked to provide a supporting statistic, I had to be very specific to get a response: “What are some statistics on the importance of password security?” yielded no results, but “What percent of businesses had a data breach in 2023?” brought up a 2023 survey result.

Example of a prompt and response from Bard AI

Bing Chat

Bing Chat was closer to Bard than ChatGPT, acting as a more conversational, search-engine-driven tool. While I don’t think it’s set up ideally for content generation, it gets top marks for providing source links on every response, so I’ll definitely be using it as a research tool in the future.

- Content Quality: 2/5

This tool doesn’t seem to be geared toward content generation. When asked to write a blog post on a topic, for example, it says it can provide research and ideas, but not write the content. It appears to summarize or suggest content from the internet rather than generate its own original content. Prompts also don’t seem to build on each other – for example, when I asked it to “expand on this” after the initial prompt, it didn’t know what I was referring to.

- Creativity: 3/5

Bing Chat acted like a virtual assistant, offering extra tips and recommendations beyond its response to the specific prompt. I noticed it recommended sponsored products, which I didn’t see in the other tools. I like that it lets you choose a “conversation style” (creative, precise, or balanced), so you can adjust it for different uses. But even in creative mode, I wasn’t very impressed by its suggestions for email subject lines.

- Prompt History: 1/5

Past prompts and responses are not saved. Once you navigate away from the page and return, you’re starting over with a blank page.

- Source Citations: 4/5

This tool was the most consistent platform at providing footnotes with sources and multiple “learn more” links for every response.

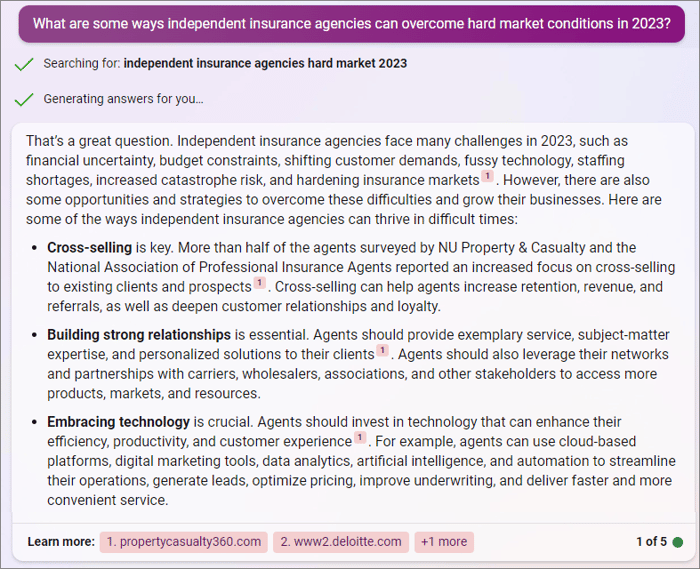

An example of a prompt and response in Bing Chat

A content creation experiment with ChatGPT

I was tasked with creating a blog article using an AI content generation tool so that our team could evaluate whether such tools might assist with content creation in the future. After experimenting with the three tools mentioned above, I chose ChatGPT for this experiment, as I felt it offered the most sophisticated written responses and the best features for long-form content.

While HawkSoft is still deciding what rules and processes to put in place around the use of AI content, we wanted to share the process and what we learned from the experiment.

My process

Here are the overall steps of my process for creating a content piece from beginning to end.

- I gave ChatGPT a specific prompt for each section of my article outline.

Initially, I prompted ChatGPT to write a 1,000-word blog post on the chosen topic (how agency management systems help independent agencies manage ACORD Forms). The response was too brief (only around 400 words) and too generic to provide useful education as a blog article, so I couldn’t use it as it was.

I’d found that ChatGPT provided much better information when given more specific prompts, so next I used my outline for the article to enter a specific prompt for each section (what are ACORD Forms, what are the most common forms, what are the benefits of using ACORD Forms, how do agency management systems assist with ACORD Form management). Sometimes I had to ask several specific questions or keep drilling down until I had the information I needed for a section. I ended up using nine prompts in total for an outline that had four main sections.

- I organized the generated responses, edited and rewrote the content as needed, and added additional clarifying content.

I pasted snippets of the different generated responses together to form the content of the article. Since the AI-generated content tended to be wordy, formulaic, and repetitive, I didn’t use much of it as it was. I used it as the building blocks for the piece, but tweaked or rewrote the content to be clearer, more concise, and more direct, and to connect the different thoughts together. This part took quite a bit of elbow grease – it definitely wasn’t a simple copy/paste process.

I also added some of my own content, especially in the section speaking specifically to HawkSoft’s features for ACORD Form management, since ChatGPT didn’t have as much knowledge as I did in that area.

- I fact checked where needed and added source links.

Since the topic of this piece was fairly basic and I already had some knowledge of the material, there were only a few places where I needed to double-check the information that ChatGPT had provided (e.g. facts on the history of ACORD). I did some online searches and cross-referenced some things against the ACORD website, adding a few links that might be helpful to readers who want to research the topic further. With a more technical topic, this process might have been a lot more laborious, since ChatGPT doesn’t always provide source links.

Interested in what the finished piece looks like? You can check it out here.

My thoughts and findings

While AI tools can undoubtedly speed up the content creation process, they certainly aren’t a one-click solution for creating quality content—at least not at this point. I see tools like this assisting (thankfully not replacing) seasoned writers and content creators. Here are a few of my tips from this experience for others who may be looking to dive in!

Better prompts = better responses

As with any other technology, better input produces better output. Be as specific as possible in your prompts, and don’t be afraid to keep prompting or regenerating the responses until you get what you’re looking for. In my experience, multiple specific questions around a topic yielded better results than one over-arching question.
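For anyone who likes to script this kind of thing, here is a minimal Python sketch of the “one specific prompt per outline section” approach. It only builds the prompt text and doesn’t call any AI tool. The function name `build_section_prompts`, the audience description, and the 150-200 word target are my own illustrative assumptions, not anything built into ChatGPT; the outline questions are the ones from my experiment.

```python
def build_section_prompts(topic, audience, sections):
    """Return one specific prompt per outline section (hypothetical helper)."""
    prompts = []
    for section in sections:
        # Each prompt restates the topic and audience so it can stand on its own.
        # The word range is an assumed target, not a tool requirement.
        prompts.append(
            f"You are writing one section of a blog article about {topic} "
            f"for {audience}. Answer this question in 150-200 words, "
            f"in plain language: {section}"
        )
    return prompts


# Outline questions taken from the ACORD Forms experiment described above.
outline = [
    "What are ACORD Forms?",
    "What are the most common ACORD Forms?",
    "What are the benefits of using ACORD Forms?",
    "How do agency management systems assist with ACORD Form management?",
]

prompts = build_section_prompts(
    topic="how agency management systems help independent agencies manage ACORD Forms",
    audience="independent insurance agency owners",  # assumed audience
    sections=outline,
)

# Print the prompts so they can be pasted into a chat tool one at a time.
for number, prompt in enumerate(prompts, start=1):
    print(f"Prompt {number}: {prompt}\n")
```

In practice you would likely still follow up on individual sections with more specific questions, as I did, rather than treating these as the final set of prompts.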

Cite sources and check facts!

The tools I tried didn’t always cite where they were getting the information they provided, and even when they did it was unclear which parts were taken from the source and which were generated by the tool. In one instance, I checked the provided source and found that much of the tool’s response was taken straight from that article. It’s crucial to acknowledge where the content originally comes from, and not present it as your own original content (which is plagiarism).

I tried running AI-generated content through a few free plagiarism and AI content detector tools in an attempt to determine whether it already existed elsewhere online, but got inconclusive or incorrect results. We’re still in the early days of AI content generation, so hopefully measures to identify and prevent plagiarized content, and to protect the intellectual property of those who created it, will continue to improve.

Also keep in mind that you shouldn’t take information generated by AI tools to be fact, especially if source links aren’t provided. AI tools are trained using information from all over the internet, and we know not everything we read online is trustworthy. Check that any provided source links are credible, or do a search to double-check the information if no source is provided.

Use AI content generation tools as a starting point, not an endpoint

I found AI tools to be most helpful in the earlier stages of the writing process: getting ideas and suggestions on topics, brainstorming titles and subject lines, performing preliminary research, and creating outlines, talking points, overviews, or summaries. From there, a human writer likely still needs to make the content their own and turn it into a quality final product.

Whether AI-generated content can (or should) be used for final written content is ultimately something each organization must decide for itself depending on its individual needs and views. As I said earlier, HawkSoft is still deciding what that looks like for us, but we’re excited to keep experimenting with new ways to use these innovative technologies.

Learn about more uses for ChatGPT in insurance

Check out our guest blog by agent David Carothers on how ChatGPT is changing the game in the insurance industry.